Natural Language Processing (NLP) is a field within Artificial Intelligence (AI) that allows machines to parse, understand, and generate human language. This branch of AI can be applied to multiple languages and across many different formats (for example, unstructured documents, audio, etc.).

Considering that the NLP market is anticipated to be worth $13.4 billion in 2020, it is worth delving deeper into this field of AI.

This article first explains how NLP works, then how it is used, and finally what the future looks like for this exciting area of AI.

How Does NLP Work?

Most NLP today is delivered using machine learning (ML) techniques. It is, however, worth looking at the more traditional methods first: they reveal the foundation on which NLP is built and are still applied in specific scenarios today.

Traditional NLP

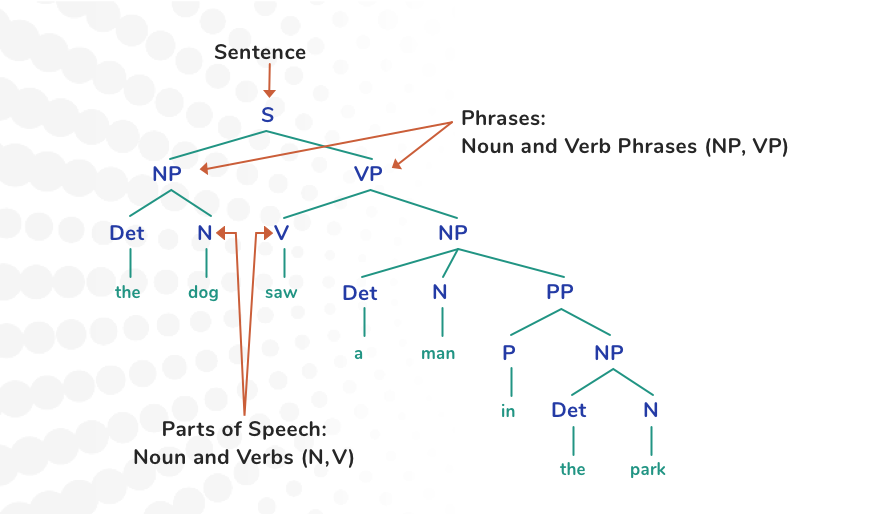

Traditional NLP attempts to deconstruct sentences into smaller phrases, which in turn are broken down into parts of speech. To illustrate this concept, take the simple sentence:

“The dog saw a man in the park.”

Traditional NLP techniques would syntactically deconstruct the above sentence as follows:

This approach uses a technique called Part-of-Speech (POS) tagging, which categorizes words into different types (for example, verb, noun, or adjective). Classification in this way is not always straightforward, and supplementary techniques such as lemmatization¹ and segmentation² (among others) are also used. Building on this categorization, the semantics, or meaning, of the sentence is then derived.
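As a rough illustration of POS tagging, the sketch below tags the example sentence by looking each word up in a small hand-built lexicon. The lexicon, the tag names, and the `tag_sentence` function are assumptions made for this example and do not come from any particular NLP framework.

```python
import re

# Hypothetical hand-built lexicon: each known word maps to one part-of-speech tag.
LEXICON = {
    "the": "DET", "a": "DET",
    "dog": "NOUN", "man": "NOUN", "park": "NOUN",
    "saw": "VERB",
    "in": "PREP",
}

def tag_sentence(sentence):
    """Return (word, tag) pairs using a plain dictionary lookup."""
    words = re.findall(r"[A-Za-z]+", sentence)
    return [(word, LEXICON.get(word.lower(), "UNKNOWN")) for word in words]

tagged = tag_sentence("The dog saw a man in the park.")
for word, tag in tagged:
    print(f"{word:5} {tag}")
```

A lookup like this cannot resolve ambiguity. The word “saw”, for instance, can be a noun or a verb depending on its neighbors, which is one reason classification is not always straightforward.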

Semantics can be derived using a number of techniques, ranging from linguistic rules to Named Entity Recognition (NER). NER uses a domain model to separate the domain entities that are relevant to the semantic process from those that are not. It usually involves an extensive database of domain objects and attributes, although advances have been made with statistical approaches to entity recognition.
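The database-driven flavor of NER can be sketched as a simple lookup against a list of known domain entities. The entity list, the type labels, and the `find_entities` function below are hypothetical and chosen only to illustrate the idea. Real systems use far larger databases and handle multi-word entities.

```python
import re

# Hypothetical domain database for a healthcare setting (illustrative only).
DOMAIN_ENTITIES = {
    "aspirin": "MEDICATION",
    "ibuprofen": "MEDICATION",
    "headache": "SYMPTOM",
    "fever": "SYMPTOM",
}

def find_entities(text):
    """Return (token, entity_type) pairs for tokens found in the domain database."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return [(token, DOMAIN_ENTITIES[token]) for token in tokens if token in DOMAIN_ENTITIES]

entities = find_entities("The patient took aspirin for a fever on Monday.")
print(entities)
```

Words that are not in the database, such as “patient” or “Monday”, are filtered out as non-relevant to this particular domain.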

While useful for proofs of concept, traditional NLP approaches are very labor-intensive, requiring a lot of developer time and effort. Machine learning has thus become the de facto mechanism for NLP development.

Machine Learning

Unlike traditional NLP techniques, a machine learning system is not explicitly programmed with rules; instead, it is trained on examples (called training data). The learning aspect means that solutions improve over time as they are exposed to more and more examples.
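To make “trained on examples” concrete, here is a minimal sketch of a Naive Bayes text classifier with add-one smoothing, applied to a made-up spam-detection task. Naive Bayes is used only because it is compact; it is not the technique this article goes on to discuss. The training sentences and labels are invented for illustration.

```python
import math
import re
from collections import Counter, defaultdict

def tokenize(text):
    return re.findall(r"[a-z']+", text.lower())

def train(examples):
    """Count how often each word appears under each label."""
    word_counts = defaultdict(Counter)
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(tokenize(text))
    return word_counts, label_counts

def predict(text, word_counts, label_counts):
    """Pick the label with the highest Naive Bayes log-probability (add-one smoothing)."""
    vocab = {word for counts in word_counts.values() for word in counts}
    total_examples = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        score = math.log(label_counts[label] / total_examples)
        denom = sum(word_counts[label].values()) + len(vocab)
        for word in tokenize(text):
            score += math.log((word_counts[label][word] + 1) / denom)
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Invented training data: no rules are written, only labeled examples.
training_data = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("meeting agenda for monday", "ham"),
    ("please review the project report", "ham"),
]
model = train(training_data)
prediction = predict("claim your free prize", *model)
print(prediction)  # spam
```

Adding more labeled examples to `training_data` changes the word counts and therefore the predictions, without any change to the code itself.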

There are many types of ML, each suited to different types of problems. One of the most common types used for NLP applications is the recurrent neural network (RNN). RNNs (which fall within a category of ML called deep learning) are popular in this field, given their ability to handle sequential data.

Understanding the sequence of sentences gives us the context of what is being said. As humans, we consider the context or the order of the sentences spoken and rarely try to understand them in isolation. If we take the following sentences, it is easy to see why context makes such a huge difference to the meaning:

“Tim’s dog died. Tim went for a walk. John said Tim was very sad after speaking to him.”

It is clear why Tim is sad (his dog died). We do not misconstrue it as “Tim is sad because John spoke to him.”

Given that RNNs are good at handling sequences, they are well-suited for NLP applications. We are, of course, oversimplifying RNNs in this article; there are other notable variants, for example, Gated Recurrent Units (GRU) and Long Short-Term Memory (LSTM).
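The way an RNN carries context forward can be sketched with a single recurrent unit that uses scalar values. The weights `w_x` and `w_h` below are arbitrary numbers chosen for illustration (a real network learns them from training data and works with vectors and matrices), and the function names are assumptions of this example.

```python
import math

def rnn_step(x, h_prev, w_x, w_h):
    """One recurrent step: the new state mixes the current input with the previous state."""
    return math.tanh(w_x * x + w_h * h_prev)

def run_rnn(inputs, w_x=0.8, w_h=0.5):
    """Feed the inputs in order and return the final hidden state."""
    h = 0.0
    for x in inputs:
        h = rnn_step(x, h, w_x, w_h)
    return h

# The same three values in a different order give a different final state.
forward = run_rnn([1.0, 0.5, 0.0])
reverse = run_rnn([0.0, 0.5, 1.0])
print(forward, reverse)
```

Because each step depends on the state left behind by the previous one, the network is sensitive to order, which is the property that makes it suitable for sentences.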

Typical NLP systems use RNNs or their variants as building blocks for creating encoders and decoders (explained further below) for specific problems. The state-of-the-art variation of such systems is the attention-based sequence-to-sequence network.

A sequence-to-sequence network consists of two components: an encoder and a decoder. The transformer, which relies on attention rather than recurrence, is a well-known architecture that follows this encoder-decoder design. Broadly speaking, the encoder first “understands” the input sentence and represents it as a vector. This vector is then passed to the decoder, which “maps”³ it to an output.
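The “attention” idea used in such networks can be sketched as follows: for each output step, the decoder scores every encoded input position against a query vector, turns the scores into weights, and takes a weighted average. This is a minimal sketch of plain dot-product attention using made-up two-dimensional vectors. It leaves out the learned projections and scaling that real models use.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that are positive and sum to 1."""
    peak = max(scores)
    exps = [math.exp(score - peak) for score in scores]
    total = sum(exps)
    return [value / total for value in exps]

def attend(query, encoder_states):
    """Dot-product attention: weight each encoder state by its similarity to the query."""
    scores = [sum(q * k for q, k in zip(query, state)) for state in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    context = [
        sum(weight * state[i] for weight, state in zip(weights, encoder_states))
        for i in range(dim)
    ]
    return weights, context

# Made-up vectors standing in for three encoded input words and one decoder query.
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
decoder_query = [2.0, 0.0]
weights, context = attend(decoder_query, encoder_states)
print(weights, context)
```

Here the first and third input positions receive most of the weight because they align best with the query, so they dominate the context vector handed to the decoder.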

This method has proved to be highly successful in language-related applications, including machine translation and video captioning (or creating subtitles). BERT, a transformer network from Google, has made significant improvements in this direction.

“BERT helps better understand the nuances and context of words in searches and better match those queries with more relevant results.”

The caveat in using ML (particularly deep learning approaches), however, is that a large amount of data is needed to train the system. Training time, even with GPUs and TPUs, can also run to days. The other concern is the lack of accountability; what you have at the end is a “black box” with no clear “audit trail.” How important accountability is depends on the nature of the solution and the risks associated with its application. Areas of healthcare, for example, carry a higher risk with wider-reaching implications than particular retail applications.

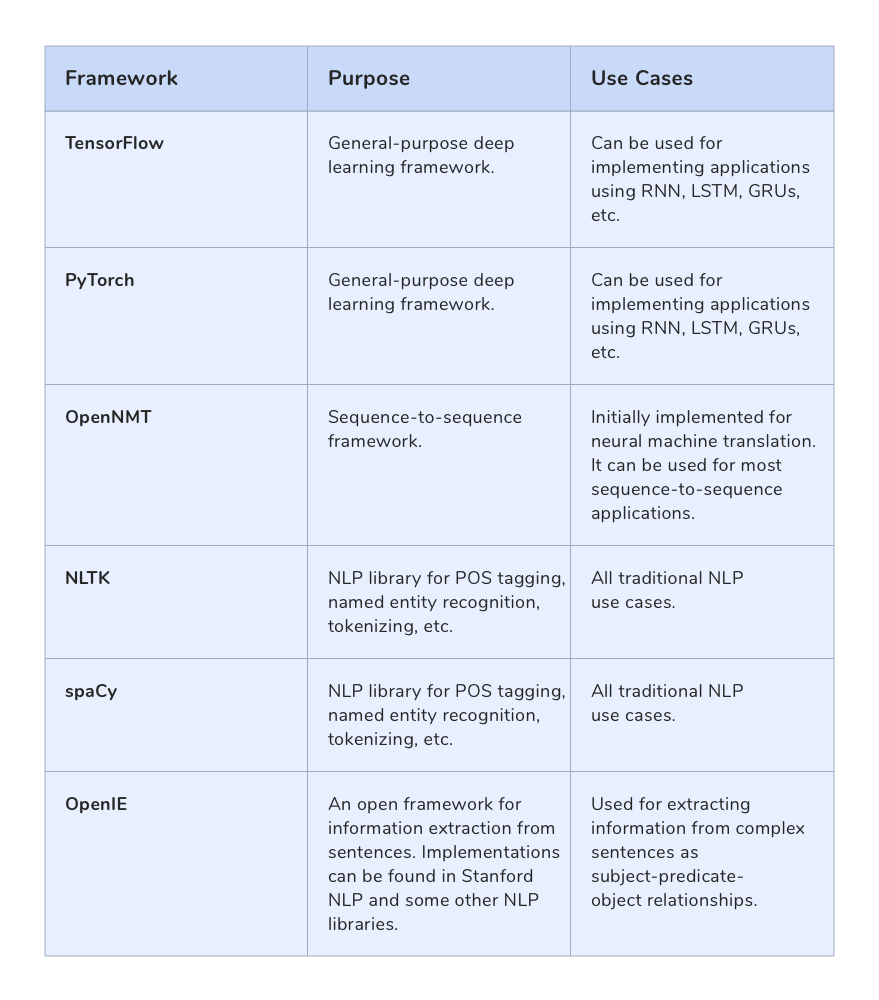

Note: For reference, the appendix at the end of this article lists the common NLP frameworks (both traditional and ML).

How NLP Is Used

The more common uses of NLP include spelling and grammar checkers in text editors (such as word processors and web forms) and spam detection in email software.

Other common uses include CV filtering by recruiters and language translation.

"Google Translate supports over 100 languages at various levels, and … serves over 500 million people daily."

NLP, however, is making a massive impact in many other areas of business.

Listed below are just a few of the areas in NLP that continue to evolve and provide significant benefits to companies.

Customer Engagement

Improving customer engagement can have a massive impact on a company's finances, and more efforts are being made to leverage technology to achieve this. An obvious use of AI in this space is chatbots and personal assistants. We have all been frustrated by clunky chatbot interactions on websites that do little to endear us to the company whose site we are visiting. Many improvements have been taking place in this space, however, to improve the customer experience.

One of the more successful techniques employed here is sentiment analysis. Sentiment analysis attempts to extract from the text not what is written per se but the person's feelings or sentiments behind the actual statement.

If we take the following statements:

"I experienced a terrible response and was not happy with the service."

"I can't thank you enough for such a great product, and I am delighted with it."

The two statements express different sentiments (angry and happy). Sentiment analysis uses different NLP techniques to categorize such statements according to the writer's attitude or emotion. By recognizing the tone, chatbots can respond accordingly, helping to replace “clunky” interactions with more human-like and purposeful responses.
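One of the simplest ways to approximate sentiment analysis is a word-list approach, sketched below on the two statements above. The word lists, the negation rule, and the function names are assumptions made for this example. Production systems typically learn sentiment from labeled data rather than from fixed lists.

```python
import re

# Hypothetical word lists, kept tiny for illustration.
POSITIVE = {"great", "delighted", "happy"}
NEGATIVE = {"terrible", "poor", "angry"}
NEGATORS = {"not", "never"}

def sentiment_score(text):
    """Add +1 or -1 per sentiment word, flipping the sign if the previous word is a negator."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, token in enumerate(tokens):
        value = 1 if token in POSITIVE else -1 if token in NEGATIVE else 0
        if value and i > 0 and tokens[i - 1] in NEGATORS:
            value = -value
        score += value
    return score

def label(text):
    score = sentiment_score(text)
    return "positive" if score > 0 else "negative" if score < 0 else "neutral"

first = "I experienced a terrible response and was not happy with the service."
second = "I can't thank you enough for such a great product, and I am delighted with it."
print(label(first), sentiment_score(first))    # negative -2
print(label(second), sentiment_score(second))  # positive 2
```

The negation rule matters: without it, “not happy” would count as a positive signal and partly cancel out “terrible”.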

The financial rewards for businesses that can successfully implement chatbot technology are huge. Swedbank, a bank based in Sweden with branches in the U.S., was handling over 3.6 million annual customer interactions through its 700 contact centers. Following the successful implementation of chatbot technology, the bank was able to automate over 30,000 customer conversations per month with a 78% first contact resolution rate. Coca-Cola has also reported handling between 15,000 and 30,000 conversations a month with this technology.

Monitoring Trends

Software that can monitor human conversations and comments, and turn them into observations that inform business decisions, has enormous ramifications.

NLP can be used not only to search customer comment repositories and address issues proactively but also to monitor social media to gauge brand reputation.

There are more than 600 million tweets per day and more than 500,000 Facebook comments posted every minute. The potential for software to deliver business insight to companies from this vast repository is enormous.

Getting Personal

Although some crossover exists, chatbots mimic human conversation while personal assistants⁴ understand a command and act on it. Obvious contenders here are Siri, Alexa, and Google Assistant. Personal assistants can be used to perform many tasks, from playing media to setting alarms and online shopping.

In its AI innovation report, Deloitte has estimated an increase to $9.9 billion by 2021 for the workflow and management system marketplace. If we then consider that personal assistants help automate tasks and workflow, the potential is obvious.

A simple example of a personal assistant for business is Microsoft's Cortana for Enterprise. Cortana automates several tasks, including:

- Setting reminders

- Sending emails and texts

- Managing calendar

- Opening applications

Of course, there are many alternatives to Cortana, which is used here as an example.

More sophisticated uses of personal assistants include automating aspects of sales, HR, and marketing. Examples include:

- Conversica: Salesforce personal assistant

- Drift: Personal assistant that filters leads

- Intercom: Personal assistant for managing sales leads

- Mya Systems: Personal assistant for managing recruitment conversations

The above list provides a few examples of where NLP is effectively delivering value. Many more exist.

For SMEs that lack the appetite or budget for custom AI development, there are also mechanisms for integrating with Twitter and Facebook's personal assistants. These initiatives allow businesses to maximize their social media interactions through easy-to-follow practices and drag-and-drop UIs.

Question and Answer Generation

Education is essential but costly. Advances in NLP have made it possible to generate test questions from course material, saving tutors time and money.

Classifying and Summarizing Documents

The ability to automatically sift through a large number of documents and categorize them brings great benefits. Examples include annotating vast amounts of medical records or summarizing (as abstracts) archived case notes in a legal firm.
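A very basic form of summarization is extractive: score each sentence by how many frequent words it contains and keep the top-scoring ones. The sketch below shows that idea on invented case notes. The stopword list, the scoring rule, and the `summarize` function are assumptions of this example and are far simpler than what a production summarizer would use.

```python
import re
from collections import Counter

# Hypothetical stopword list: common words that carry little meaning.
STOPWORDS = {"the", "a", "an", "and", "of", "to", "in", "was", "is", "for", "on", "with", "at"}

def content_words(text):
    return [word for word in re.findall(r"[a-z]+", text.lower()) if word not in STOPWORDS]

def summarize(text, max_sentences=1):
    """Keep the sentences whose words are most frequent across the whole text."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    frequency = Counter(content_words(text))

    def score(sentence):
        return sum(frequency[word] for word in content_words(sentence))

    top = sorted(sentences, key=score, reverse=True)[:max_sentences]
    # Return the selected sentences in their original order.
    return [sentence for sentence in sentences if sentence in top]

# Invented case notes, for illustration only.
notes = (
    "The client reported a contract dispute. "
    "The contract dispute concerns late delivery of goods. "
    "A meeting was held on Tuesday."
)
summary = summarize(notes)
print(summary)
```

This scoring rule favors longer sentences, so a practical version would normalize by sentence length or use a learned model instead.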

Document Generation

Document generation offers many applications for NLP. Examples include generating reports from sales data or summarizing electronic staff performance records.

Convergence Is Key

A convergence of technologies is where the real momentum is achieved. If we look at AI in general, it has gained momentum since its origins in the 1950s because of the convergence of enablers such as cloud computing and big data. The same principle can be observed with NLP. If we look at how NLP is merging with technologies such as voice and image recognition, we can see its use expand significantly. Some examples are listed below.

- SignAll is an app that combines image processing and NLP to convert sign language to text, helping deaf people communicate with those who don't know sign language.

- At Columbia University, researchers have mixed sentiment analysis with image recognition to analyze the “emotionality” of social media postings. Intended applications include prevention of gang violence and shootings.

- Woebot, a digital therapist connected to Facebook, looks for signs of depression and anxiety in people that may indicate an emergency.

What Does the Future Hold?

The authors believe that NLP will be one of the main disruptive influences in the technology space. To illustrate how this will unfold, it is useful to refer to Gartner's report on the Top 10 Strategic Technology Trends for 2020 and three points in particular:

Democratization of Expertise

AI will play an essential role in the dissemination and distribution of expertise. NLP has already made significant steps in enabling knowledge transfer and educational activities, and it will continue to do so.

Human Augmentation

We discussed earlier in this article how advances in image and voice recognition are increasingly becoming integrated with NLP techniques. This is a marriage that will endure and be vital for useful human augmentation advances.

Transparency and Traceability

AI is infiltrating our personal and business lives, with assisted and automated decision-making taking on an ever-growing role. With decision-making, however, comes accountability and the need to explain how decisions are derived. This point is particularly pertinent in a world that demands transparency and openness. “Explainable AI” will need to become more efficient, and it will no longer be acceptable for the “black box” to make “unaudited” decisions. NLP will play a crucial role in communicating how conclusions are derived via a language-centric experience.

Other predicted growth areas include:

Assisted Diagnosis

Diagnosis is a huge investment area for the healthcare industry, and it will continue to dominate many funding agendas. NLP will be used to aid practitioners' decision-making by retrieving relevant information on demand (for example, past medical reports requested through voice-activated commands).

Intelligent Personal Assistants

With an increased understanding of the complexities of language such as slang, mispronunciations, accents, and sarcasm, NLP will be able to facilitate smarter assistants. These intelligent assistants will be able to capture multiple tasks from continuous dialogue and interact with planning software to create and maintain recurring work packages over a long period.

Searching Unstructured Data Smartly

Voice activation will enable users to search across many different data types including video, audio, and poorly structured documents. By combining NLP with other technologies such as image classification, it will be possible, for example, for architects to get defect reports automatically generated from construction plans.

Final Thoughts

As a natural progression of its integration with our everyday life, AI will continue to evolve to be more human-like. However, for us to fully embrace a technology that can automate and assist, AI will need to meet and exceed our communication expectations and abilities. Such expectations involve sight, sound, and hearing, and NLP will clearly play a prominent role, either as a primary or supporting technology, in this mix.

NLP techniques and business uses will continue to grow, as will their integration with other technologies. Businesses wishing to keep ahead in the digital age should, therefore, include NLP as a strong contender within their AI adoption strategy.

Appendix A: Common NLP Frameworks

Footnotes:

1. Lemmatization returns a word to its root form; for example, “walking” becomes “walk”.

2. Segmentation is a method of further dividing words into smaller functional units.

3. The word “map” very much simplifies this complex operation, which is much more than one-to-one mapping.

4. While personal assistants comprise more than the comprehension (NLP) aspect, it is nevertheless an essential part and hence relevant to this article.

About the Authors

Simon Chambers is the Managing Director and Co-Founder of New Leaf Technology Solutions, where he enjoys indulging his passion for AI. His specific interests include AI in diagnosis, NLP, and the convergence of symbolic and adaptive AI techniques.

Ravi Padmaraj is an Architect at QBurst, where he has worked on many data science and data engineering projects for diverse industries. His primary areas of interest are natural language processing and image processing.